Xen.org is pleased to announce the release of Xen 4.2.0. The release is available from the download page:

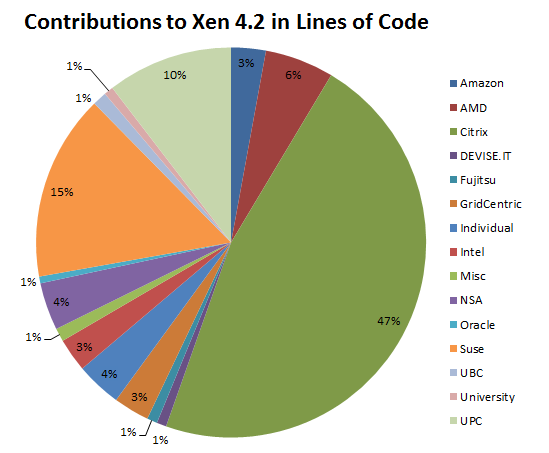

This release is the culmination of 18 months of development effort: almost 2900 commits and almost 300K lines of code, contributed by 124 individuals from 43 organizations.

New Features

The release incorporates many new features and improvements to existing features. There are improvements across the board, including in security, scalability, performance and documentation.

XL is now the default toolstack: Significant effort has gone into the XL toolstack in this release, and it is now feature complete and robust enough that we have made it the default. This toolstack can now replace xend in the majority of deployments; see XL vs Xend Feature Comparison. As well as the improvements to XL, the underlying libxl library has been significantly improved and now supports the majority of the most common toolstack features. In addition, the API has been declared stable, which should make it even easier for external toolstacks such as libvirt and XCP’s xapi to make full use of this functionality in the future.

Large Systems: Following on from the improvements made in 4.1, Xen now supports even larger systems, with up to 4095 host CPUs and up to 512 guest CPUs. In addition, toolstack features such as the ability to automatically create a CPUPOOL per NUMA node and more intelligent placement of guest VCPUs on NUMA nodes have further improved the Xen experience on large systems. Other new features, such as multiple PCI segment support, have also made a positive impact on such systems.

Improved security: The XSM/Flask subsystem has seen several enhancements, including improved support for disaggregated systems and a rewritten example policy which is clearer and simpler to modify to suit local requirements.

Documentation: The Xen documentation has been much improved, both the in-tree documentation and the wiki. This is in no small part down to the success of the Xen Document Days, so thanks to all who have taken part.

You can find more information in the release notes and feature list on the wiki.

Upstreaming

The Xen project continues to work closely with our upstreams.

Of particular note in this release cycle is the upstreaming of the HVM device model support into upstream qemu. After the Linux dom0 support (merged upstream in Linux 3.0 during the Xen 4.1 release cycle), the qemu-derived device model was the largest remaining piece of code which required upstreaming. Support for Xen was merged into upstream qemu prior to the qemu 0.15 release and is supported as an option using the XL toolstack. It will become the default in Xen 4.3. Alongside this support we have also gained support for SeaBIOS (a cleaner and more maintainable legacy BIOS, used by default when upstream qemu is selected) and Tianocore/OVMF (a UEFI BIOS). Support for Xen has been merged into the upstreams of both of these projects during the Xen 4.2 development cycle.

More Information

Links to useful wiki pages and other resources can be found on the Xen support page.

Thanks

Contributions were made to this release by 124 individuals from 43 organizations, and that’s not counting contributions to external projects such as the BSDs, Linux or qemu. Many thanks to everyone who contributed to this release, either through code, testing, documentation or in any other way.

The diagram below shows organisations which contributed more than 1% in lines of code to the Xen 4.2 release. Several items in the diagram describe groups of people or organisations: Individual covers contributions by individuals whose affiliation is unknown, Misc covers contributions by commercial organisations which did not go above 1% individually, and University covers contributions by universities which did not go above 1% individually.

I also want to list the top 20 contributors to Xen 4.2 (in terms of commits and lines of code). These are: Jan Beulich (338 commits/40357 LOC), Roger Pau Monne (87/36932), Ian Campbell (504/32009), Stefano Stabellini (124/29130), Ian Jackson (174/27900), Daniel De Graaf (79/11103), David Vrabel (45/11075), Tim Deegan (143/8790), Christoph Egger (67/8590), Matt Wilson (9/8508), Andrés Lagar-Cavilla (115/8050), Keir Fraser (143/5593), Wei Wang (34/5577), Anthony Perard (45/5289), Olaf Hering (154/4296), Qing He (20/4179), George Dunlap (75/4088), Dario Faggioli (30/3742), Shriram Rajagopalan (21/3481) and Jonathan Davies (3/3230).

For a complete breakdown see the Acknowledgement page. A big thank you again!